LaMDA Models by Google DeepMind

About

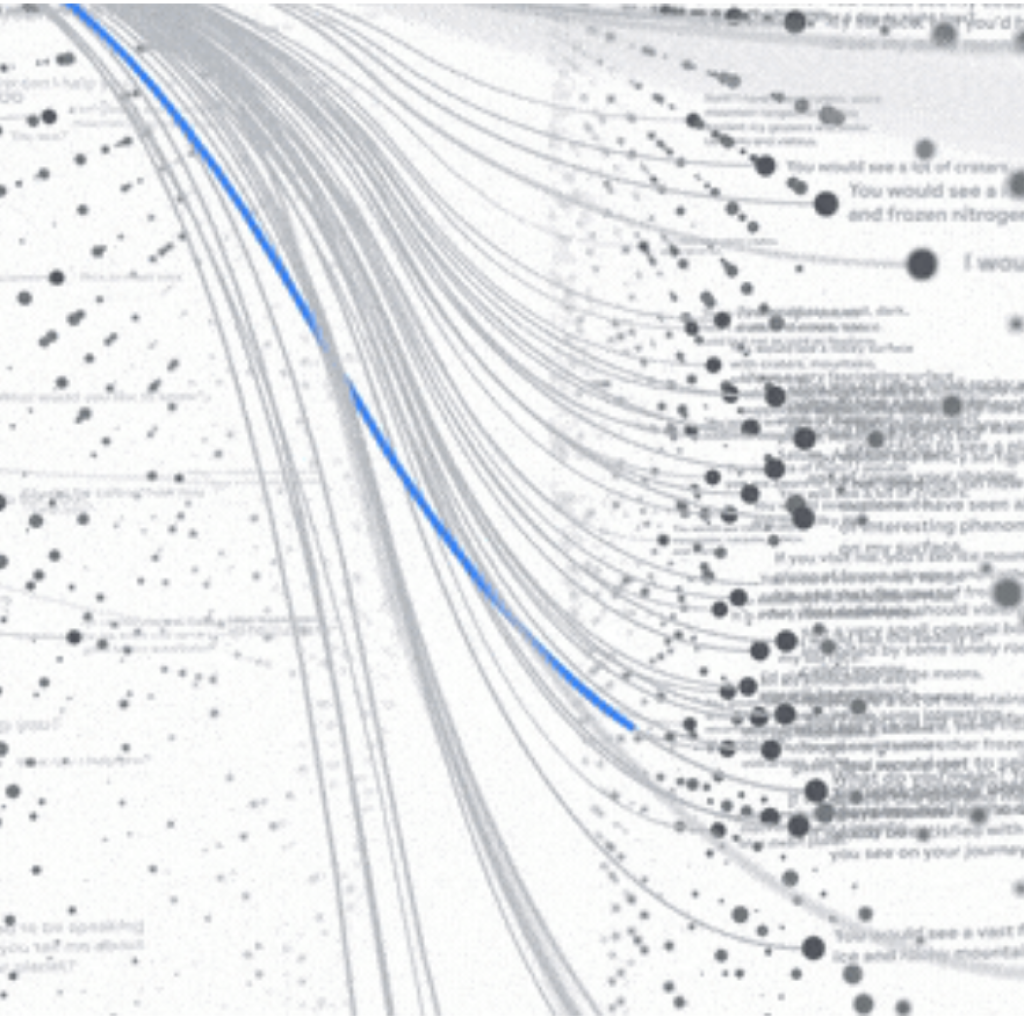

LaMDA, short for Language Model for Dialogue Applications, is Google's family of conversational large language models. Built on the Transformer architecture that Google Research introduced in 2017, LaMDA is fine-tuned for dialogue, which helps it follow the nuances of open-ended conversation. It was pre-trained on approximately 1.56 trillion words of public dialogue data and web text. The largest LaMDA model has 137 billion parameters. Google's approach to safety and factual accuracy in LaMDA includes fine-tuning on annotated data and consulting external knowledge sources. LaMDA powered the initial version of Google's Bard and has sparked public discussion about AI sentience.

Current Variants

Use-when guidance is derived from seed capabilities, context, release, and replacement fields.

| Model | Use when | Released | Signals | Status |
|---|---|---|---|---|
| LaMDA 137B | Use when the workload needs 137B parameters. | 2021-05 | 137B parameters | Current |
| LaMDA 8B | Use when the workload needs 8B parameters. | 2021-05 | 8B parameters | Current |
| LaMDA 2B | Use when the workload needs 2B parameters. | 2021-05 | 2B parameters | Current |
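The "Use when" column keys each variant to a parameter count, so the choice can be sketched as a lookup over the table rows. The following is a minimal illustration only: the `Variant` class and the `largest_within_budget` helper are hypothetical names introduced here, not part of any Google API, and the parameter budget is assumed to be something the reader already knows for their workload.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Variant:
    """One row of the variants table (hypothetical container)."""
    name: str
    params_billion: float
    released: str


# Rows copied from the variants table.
VARIANTS = [
    Variant("LaMDA 137B", 137, "2021-05"),
    Variant("LaMDA 8B", 8, "2021-05"),
    Variant("LaMDA 2B", 2, "2021-05"),
]


def largest_within_budget(max_params_billion: float) -> Optional[Variant]:
    """Return the largest listed variant at or under the budget, or None."""
    candidates = [v for v in VARIANTS if v.params_billion <= max_params_billion]
    return max(candidates, key=lambda v: v.params_billion, default=None)


if __name__ == "__main__":
    choice = largest_within_budget(10)
    print(choice.name if choice else "no variant fits")
```

With a budget of 10 billion parameters the helper selects LaMDA 8B; a budget below 2 billion returns `None` because no listed variant fits.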

Release Timeline

1 release group

Specifications (3 models)

| Model | Released | Parameters |
|---|---|---|
| LaMDA 137B | 2021-05 | 137B |
| LaMDA 8B | 2021-05 | 8B |
| LaMDA 2B | 2021-05 | 2B |
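To give the parameter counts a practical scale, the sketch below estimates how much memory the raw weights alone would occupy. It assumes 2 bytes per parameter (16-bit weights), which is an assumption made for illustration and not a documented property of LaMDA. It also ignores activations, optimizer state, and any serving overhead. The function name `weight_memory_gb` is hypothetical.

```python
# Parameter counts taken from the specifications table.
PARAMS = {
    "LaMDA 137B": 137e9,
    "LaMDA 8B": 8e9,
    "LaMDA 2B": 2e9,
}


def weight_memory_gb(num_params: float, bytes_per_param: int = 2) -> float:
    """Estimate weight storage in decimal gigabytes.

    bytes_per_param=2 assumes 16-bit weights (an assumption, not a LaMDA spec).
    """
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, count in PARAMS.items():
        print(f"{name}: ~{weight_memory_gb(count):.0f} GB of weights")
```

Under that assumption the three variants come to roughly 274 GB, 16 GB, and 4 GB of weights respectively. Changing `bytes_per_param` to 4 or 1 shows how the estimate scales with precision.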

Frequently Asked Questions

- What is LaMDA used for?

- LaMDA is used for open-ended dialogue applications. The family description, which presents it as a set of conversational models fine-tuned for dialogue, points to conversational workloads as the best fit.

- How does LaMDA compare to Gemma 4?

- LaMDA by Google DeepMind is strongest where you need open-ended dialogue, while Gemma 4, also by Google DeepMind, is the closest related family to check for multimodal workloads. LaMDA has 3 listed variants, while Gemma 4 reaches up to 256k context, so compare the specification and pricing tables before choosing a production model.

- Which LaMDA model should I use?

- If price is the main constraint, check the pricing table first, and note that LaMDA does not have complete provider pricing in the local data. For the most capable listed variant, evaluate LaMDA 137B.